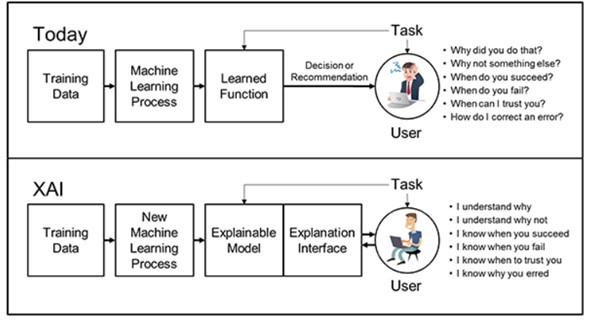

Artificial Intelligence (AI) systems, particularly deep learning models, have transformed numerous sectors with their strong performance. However, the complexity of these models often makes them "black boxes" whose decisions are difficult to understand and trust. Explainable AI (XAI) has emerged as a response, aiming to expose the inner workings of complex AI systems. This paper surveys prominent XAI techniques and evaluates their effectiveness, comprehensibility, and robustness across diverse datasets. Our findings indicate that some techniques excel at producing transparent explanations, while others offer a more cohesive understanding across varied models. The study underscores the importance of building AI systems that combine performance with interpretability, fostering trust and facilitating broader AI adoption in decision-critical domains.