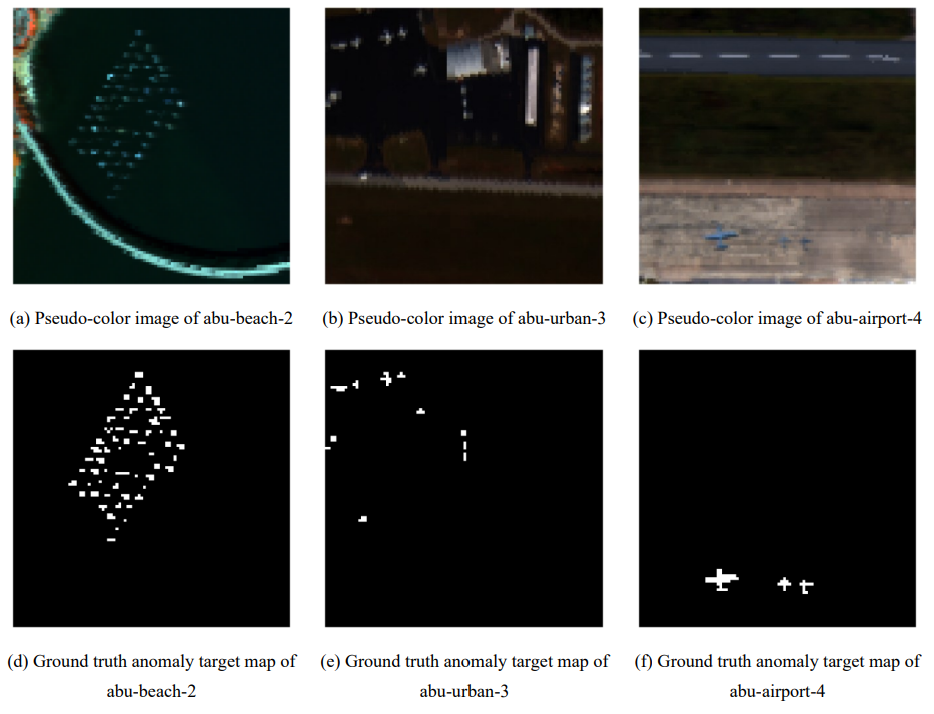

Hyperspectral anomaly detection is a key task in remote sensing: it aims to identify targets whose spectra differ significantly from the background, without prior knowledge of those targets. Traditional methods characterize the sparsity of anomalies insufficiently and are susceptible to background noise. This paper transfers an existing low-rank denoising technique, Global and Nonlocal Low-Rank Factorization (GLF), to anomaly detection, using it as a background modeling tool to obtain residual images. In the residual processing stage, several nonconvex penalty functions are systematically evaluated as replacements for the traditional L2 norm, so that the sparse distribution of anomalies is approximated more accurately, and anomaly score maps are generated through pixel-wise aggregation. Experiments on multiple ABU datasets show that the proposed method, GLF-NC, achieves a significantly higher AUC than classical methods such as RX, RPCA-RX, and LRASR. These results indicate that transferring GLF to anomaly detection and combining it with nonconvex penalties effectively improves detection accuracy by enhancing anomalies and suppressing the background.
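The residual-scoring stage described above can be illustrated with a minimal sketch. Several things here are assumptions rather than the paper's implementation: the background model is a plain truncated SVD standing in for GLF, the abstract does not name the nonconvex penalties (the log and capped-L1 penalties below are common choices picked for illustration), the aggregation is assumed to be a sum over spectral bands, and all function names and parameter values (`rank`, `eps`, `cap`) are hypothetical.

```python
import numpy as np


def low_rank_background(cube, rank=2):
    """Stand-in for GLF (NOT the paper's method): truncated-SVD background.

    cube has shape (height, width, bands).
    """
    h, w, b = cube.shape
    X = cube.reshape(-1, b)                       # pixels x bands
    mean = X.mean(axis=0, keepdims=True)
    U, s, Vt = np.linalg.svd(X - mean, full_matrices=False)
    B = (U[:, :rank] * s[:rank]) @ Vt[:rank] + mean
    return B.reshape(h, w, b)


def l2_penalty(r):
    """Conventional squared (L2) penalty, kept as a baseline."""
    return r ** 2


def log_penalty(r, eps=1e-2):
    """Nonconvex log penalty (assumed choice; eps is illustrative)."""
    return np.log1p(np.abs(r) / eps)


def capped_l1_penalty(r, cap=0.1):
    """Nonconvex capped-L1 penalty (assumed choice; cap is illustrative)."""
    return np.minimum(np.abs(r), cap)


def anomaly_score_map(cube, penalty=log_penalty, rank=2):
    """Penalize the residual element-wise, then sum over bands per pixel."""
    residual = cube - low_rank_background(cube, rank)
    return penalty(residual).sum(axis=2)
```

Calling `anomaly_score_map(cube, penalty=capped_l1_penalty)` on a `(height, width, bands)` array returns a `(height, width)` score map in which larger values mark more anomalous pixels. Passing the penalty as a function keeps the L2 baseline and the nonconvex variants interchangeable, which mirrors the comparison the abstract describes.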